Siri support can be added using an Intents extension and an Intents UI extension. This adds considerable overhead and is not always the easiest route in a big project, because the main app and the extension need to share logic.

A much easier way to implement Siri support is by using NSUserActivity.

Asking Siri to open a page in your app

In this example, we’re implementing Siri support for opening a specific board in your app.

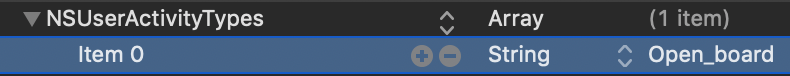

Start by adding the Open_board activity type to the NSUserActivityTypes array in your Info.plist.
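
The same Open_board string has to appear in Info.plist, in the activity you create, and in the AppDelegate, so a typo in any of them is easy to miss. Below is a minimal sketch of one way to guard against that. The `SiriActivityType` namespace and its `isDeclared(_:in:)` helper are hypothetical names introduced for illustration and are not part of the original project.

```swift
import Foundation

/// Hypothetical namespace that keeps the activity type in a single place.
enum SiriActivityType {
    static let openBoard = "Open_board"

    /// Returns true when `type` is listed under the NSUserActivityTypes key
    /// of the given Info.plist dictionary.
    static func isDeclared(_ type: String, in infoDictionary: [String: Any]?) -> Bool {
        let declared = infoDictionary?["NSUserActivityTypes"] as? [String] ?? []
        return declared.contains(type)
    }
}
```

In a debug build you could, for example, call `assert(SiriActivityType.isDeclared(SiriActivityType.openBoard, in: Bundle.main.infoDictionary))` at launch to catch a missing entry early.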

After that, create an extension for the BoardDetailViewController, which we’d like to open using Siri. We need to import the Intents framework to use the suggestedInvocationPhrase property.

    import Intents

    extension BoardDetailViewController {
        func activateActivity(using board: Board) {
            // userActivity is an existing property inherited from UIResponder.
            userActivity = NSUserActivity(activityType: "Open_board")
            let title = "Open board \(board.title)"
            userActivity?.title = title
            // Stored so the board can be looked up when the shortcut is handled.
            userActivity?.userInfo = ["id": board.identifier]
            userActivity?.suggestedInvocationPhrase = title
            userActivity?.isEligibleForPrediction = true
            userActivity?.persistentIdentifier = board.identifier
        }
    }

This method needs to be called in the viewDidAppear method:

    override func viewDidAppear(_ animated: Bool) {
        super.viewDidAppear(animated)
        activateActivity(using: board)
    }

We’ve set a few properties:

userActivity

This is an existing property on UIViewController (inherited from UIResponder) and indicates that the app is currently performing a user activity.

userActivity.userInfo

We set any information needed to deeplink to the page when handling the Siri action. More about this later.

userActivity.suggestedInvocationPhrase

This is the suggested sentence which is shown after “You can say something like…”

userActivity.persistentIdentifier

This unique identifier makes it possible to delete the activity later on.
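
As a rough sketch of how that deletion could look, the helper below removes the stored activity for a board that no longer exists. The function name `deleteSavedActivity(forBoardIdentifier:completion:)` is hypothetical, and it assumes the board identifier is the same string that was assigned to persistentIdentifier.

```swift
import Foundation

/// Hypothetical helper: deletes the saved "open board" activity for a board,
/// for example after the user removed that board from the app.
/// Assumes `boardIdentifier` equals the activity's persistentIdentifier.
@available(iOS 12.0, *)
func deleteSavedActivity(forBoardIdentifier boardIdentifier: String,
                         completion: @escaping () -> Void = {}) {
    NSUserActivity.deleteSavedUserActivities(
        withPersistentIdentifiers: [boardIdentifier],
        completionHandler: completion
    )
}
```

Calling this from the place where a board is deleted keeps stale boards out of Siri suggestions.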

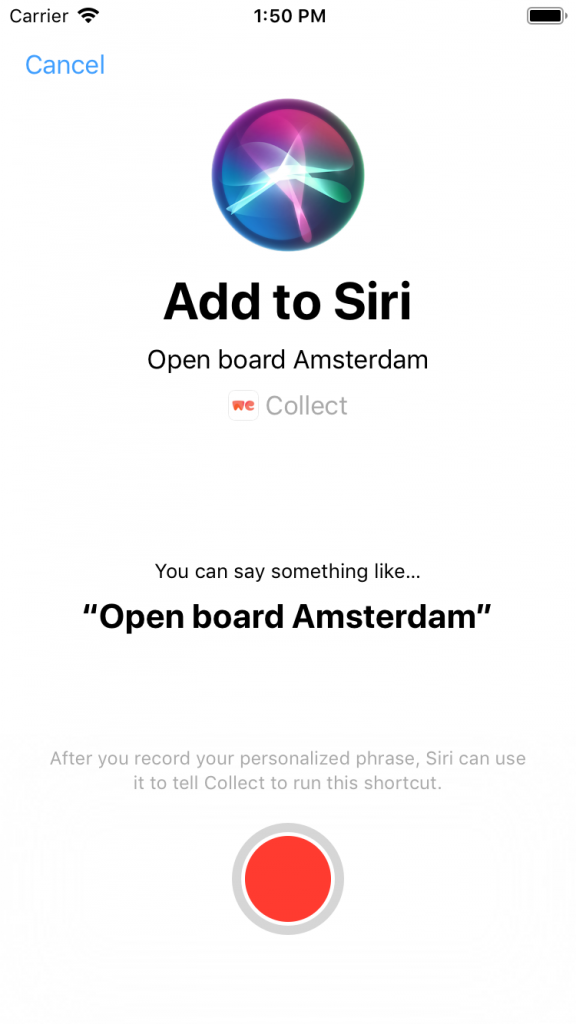

Presenting the Add Voice Shortcut ViewController

To make it possible for your user to record a custom shortcut, we’re going to present the INUIAddVoiceShortcutViewController which is available after importing the IntentsUI framework. In our example, we’re creating a button in our UI which executes the presentAddOpenBoardToSiriViewController method.

    import IntentsUI

    extension BoardDetailViewController {
        func presentAddOpenBoardToSiriViewController() {
            // The activity is set in activateActivity(using:), so bail out if it is missing.
            guard let userActivity = self.userActivity else { return }
            let shortcut = INShortcut(userActivity: userActivity)
            let viewController = INUIAddVoiceShortcutViewController(shortcut: shortcut)
            viewController.modalPresentationStyle = .formSheet
            viewController.delegate = self
            present(viewController, animated: true, completion: nil)
        }
    }

    extension BoardDetailViewController: INUIAddVoiceShortcutViewControllerDelegate {
        func addVoiceShortcutViewController(_ controller: INUIAddVoiceShortcutViewController, didFinishWith voiceShortcut: INVoiceShortcut?, error: Error?) {
            controller.dismiss(animated: true)
        }

        func addVoiceShortcutViewControllerDidCancel(_ controller: INUIAddVoiceShortcutViewController) {
            controller.dismiss(animated: true)
        }
    }

This will show a native iOS view with a record button so the user can create the custom shortcut.

Handling an incoming shortcut

Whenever the Open Board shortcut is triggered, the system opens your app and calls the application(_:continue:restorationHandler:) method of the AppDelegate. This is where you match the incoming activity against our Siri activity and open the specific page. In our example, we’re starting the deeplink action to the requested board.

    // In the iOS 12 SDK the restoration handler takes [UIUserActivityRestoring]? instead of [Any]?.
    func application(_ application: UIApplication, continue userActivity: NSUserActivity, restorationHandler: @escaping ([UIUserActivityRestoring]?) -> Void) -> Bool {
        if userActivity.activityType == "Open_board", let parameters = userActivity.userInfo as? [String: String] {
            try? BoardDeeplinkAction(parameters: parameters, context: persistentContainer.viewContext).execute()
            return true
        }
        return false
    }

And that’s about it!
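
One thing to keep in mind: the cast of userInfo to [String: String] fails as a whole as soon as any value in the dictionary is not a String. If you expect to store other value types later, a more tolerant variant is to read only the "id" key. The sketch below shows this with a hypothetical `boardIdentifier(fromActivityType:userInfo:)` helper that is not part of the original code.

```swift
import Foundation

/// Hypothetical helper: returns the board identifier stored under "id",
/// or nil when the activity type does not match or the identifier is missing.
func boardIdentifier(fromActivityType activityType: String,
                     userInfo: [AnyHashable: Any]?) -> String? {
    guard activityType == "Open_board" else { return nil }
    return userInfo?["id"] as? String
}
```

Inside the AppDelegate method you could then call `boardIdentifier(fromActivityType: userActivity.activityType, userInfo: userActivity.userInfo)` and only start the deeplink action when it returns a value.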

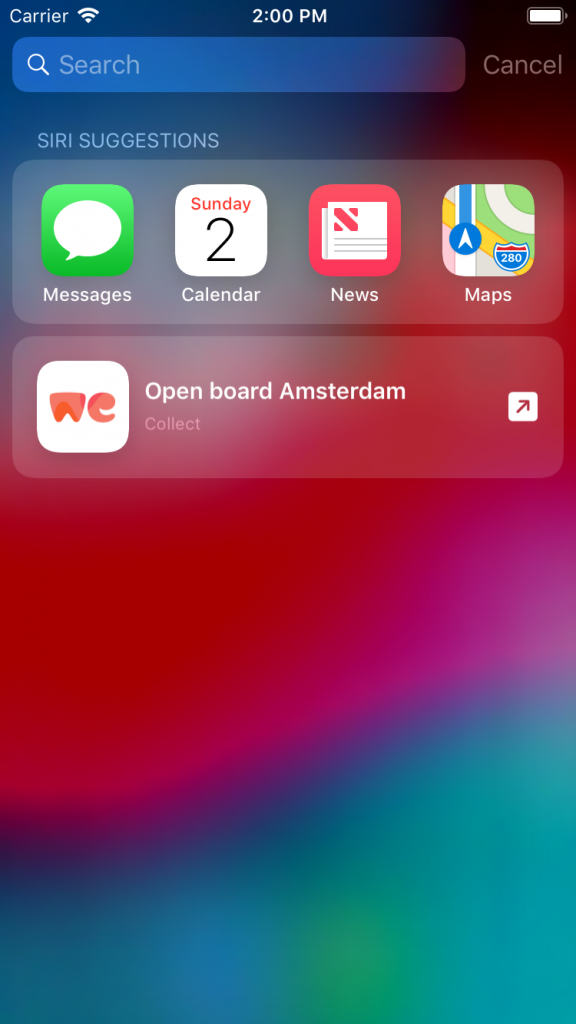

Eligible for prediction as a bonus

That’s right, eligible for prediction. We get some extra functionality for free, as the system uses the activity to predict user flows. If the user often opens the Amsterdam board in this example, it’s likely to show up in Siri Suggestions.