Flaky tests can be frustrating to deal with. You're ready to open your PR until you notice your tests fail on CI even though they succeeded locally. You even notice the failing test succeeds when run individually, and you're almost ready to say, "Merging in as tests succeed locally." Test repetitions sometimes let you reproduce the failure, but what if that doesn't help?

Obviously, this is not how we should handle flaky tests. Instead, we can use several techniques, including recent Xcode features, to dive into the root cause of failing tests. Test Repetitions, combined with a few coding guidelines, can help you prevent and resolve randomly failing tests.

What are flaky tests?

A flaky test is a test that sometimes succeeds and sometimes fails without any change to the code. In other words, it's an inconsistent test. Flaky tests can occur on your local machine, but they show up more often on CI systems, which are typically slower or start from a clean slate. To better understand flaky tests, we can go over a few common causes.

Race Conditions

Race conditions can result in unpredictable behavior. For example, if multiple threads access the same data and you don't control which one goes first, the order might differ between runs. Since each run can be different, the result can be a test that sometimes fails and sometimes succeeds. Data races are explained in-depth in my article Thread Sanitizer explained: Data Races in Swift.
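To illustrate, here's a minimal sketch in plain Swift with a hypothetical shared counter. The unsynchronized version can lose increments when threads interleave, so its final value may change from run to run; guarding access with NSLock makes every increment count. DispatchQueue.concurrentPerform is only used here to run the increments on multiple threads at once, and all type and function names are made up for illustration.

```swift
import Dispatch
import Foundation

// Unsafe: `value += 1` is a read-modify-write, so two threads can read the
// same old value and one increment gets lost. A test asserting on the final
// count could pass on one run and fail on the next.
final class UnsafeCounter {
    var value = 0
    func increment() { value += 1 }
}

// Safe: an NSLock serializes access, so every increment is applied exactly once.
final class LockedCounter {
    private let lock = NSLock()
    private var storage = 0

    func increment() {
        lock.lock()
        defer { lock.unlock() }
        storage += 1
    }

    var value: Int {
        lock.lock()
        defer { lock.unlock() }
        return storage
    }
}

// Performs `iterations` calls to `increment`, spread across multiple threads.
func incrementConcurrently(iterations: Int, _ increment: () -> Void) {
    DispatchQueue.concurrentPerform(iterations: iterations) { _ in
        increment()
    }
}

let locked = LockedCounter()
incrementConcurrently(iterations: 1_000) { locked.increment() }
print(locked.value) // 1000
```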

Environment assumptions

Your tests might require a specific configuration to succeed. For example, a test might expect a thumbnail to be resized to a target width and height of 200. A test relying on that specific target size might suddenly fail if the configuration changes. Such environment assumptions are often tied to global configuration, which brings me to the next cause:
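A sketch of one way to avoid such an assumption: inject the configuration into the code under test and derive the expected value from it instead of hard-coding 200. ThumbnailConfiguration and ThumbnailResizer are hypothetical types, and the aspect-fit rule is just an example resizing strategy.

```swift
// Hypothetical configuration for thumbnail generation.
struct ThumbnailConfiguration {
    var maxWidth: Double
    var maxHeight: Double

    // The app-wide default a test might otherwise silently depend on.
    static let standard = ThumbnailConfiguration(maxWidth: 200, maxHeight: 200)
}

// Hypothetical resizer that scales a size down to fit the configured bounds,
// preserving its aspect ratio.
struct ThumbnailResizer {
    let configuration: ThumbnailConfiguration

    func targetSize(width: Double, height: Double) -> (width: Double, height: Double) {
        let scale = min(configuration.maxWidth / width,
                        configuration.maxHeight / height,
                        1) // never upscale
        return (width * scale, height * scale)
    }
}

// Test-style check: the configuration is passed in explicitly, so a change to
// ThumbnailConfiguration.standard elsewhere can't break this expectation.
let configuration = ThumbnailConfiguration(maxWidth: 200, maxHeight: 200)
let resizer = ThumbnailResizer(configuration: configuration)
let size = resizer.targetSize(width: 400, height: 200)
assert(size.width == configuration.maxWidth)       // 200
assert(size.height == configuration.maxHeight / 2) // 100
```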

Global State

Global state comes down to variables or instances that are globally available in your application's code. If the global state is immutable, there's not much risk to take into account. However, if your global state is mutable, you can easily run into trouble.

Mutable global configurations could be configured differently for different test classes, causing your tests to fail in certain scenarios. I've often seen tests succeed when run individually because the global state was unaffected, only to fail when running the complete test suite because another test had changed the global configuration. Therefore, it's important to keep your global configuration as stable as possible.
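The order dependency can be reproduced in plain Swift. In this sketch, AppSettings is a hypothetical singleton and the two functions stand in for test methods: test B passes on its own but fails once test A has run earlier in the same process.

```swift
// Hypothetical app-wide settings object with mutable global state.
final class AppSettings {
    static let shared = AppSettings()
    var thumbnailSide = 200
}

// "Test A" changes the shared setting and never restores it.
func testCustomThumbnailSide() -> Bool {
    AppSettings.shared.thumbnailSide = 100
    return AppSettings.shared.thumbnailSide == 100
}

// "Test B" silently assumes the default value.
func testDefaultThumbnailSide() -> Bool {
    AppSettings.shared.thumbnailSide == 200
}

// Run individually, test B passes...
print(testDefaultThumbnailSide()) // true
// ...but once test A has run first in the same process, it fails.
print(testCustomThumbnailSide())  // true
print(testDefaultThumbnailSide()) // false
```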

Communication with external services

The last common cause for flaky tests is communication with external services. My first recommendation is not to rely on external services at all and to mock them as much as possible, for example, by learning How to mock Alamofire and URLSession requests in Swift. However, this might not always be possible. Longer timeouts are the best way to handle those cases, but they will never provide a stable foundation for consistent tests.
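When mocking is possible, one common approach is to hide the network behind a protocol and inject an in-memory implementation in tests. This is a sketch of that protocol-based technique, not necessarily the approach from the linked article; DataFetching, MockDataFetcher, UserService, and the example.com URL are hypothetical, and the calls are synchronous to keep the example short.

```swift
import Foundation

// Hypothetical abstraction over the network layer. Production code would
// implement it with URLSession; tests inject an in-memory mock instead.
protocol DataFetching {
    func data(from url: URL) throws -> Data
}

enum MockError: Error {
    case noResponse(URL)
}

// Returns canned responses without touching the network, so results don't
// depend on connectivity, latency, or server state.
struct MockDataFetcher: DataFetching {
    var responses: [URL: Data]

    func data(from url: URL) throws -> Data {
        guard let data = responses[url] else { throw MockError.noResponse(url) }
        return data
    }
}

struct User: Decodable, Equatable {
    let name: String
}

// Hypothetical service under test; it only knows about the protocol.
struct UserService {
    let fetcher: any DataFetching
    let baseURL: URL

    func user(id: Int) throws -> User {
        let url = baseURL.appendingPathComponent("users/\(id)")
        return try JSONDecoder().decode(User.self, from: fetcher.data(from: url))
    }
}

let apiURL = URL(string: "https://api.example.com")!
let mock = MockDataFetcher(responses: [
    apiURL.appendingPathComponent("users/1"): Data(#"{"name":"Jane"}"#.utf8)
])
let service = UserService(fetcher: mock, baseURL: apiURL)
let user = try service.user(id: 1)
print(user.name) // Jane
```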

Identifying flaky tests

You can identify flaky tests by their inconsistent results. If a test fails every time, it's likely not a flaky test but simply a failing test. I often identify flaky tests through a failing CI run for tests that succeeded locally.
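That consistency check can be written down as a small helper, for example to tally outcomes collected from repeated local or CI runs. TestConsistency and classify are hypothetical names; a mix of passes and failures is what marks a test as flaky.

```swift
// Classification of a test based on the outcomes of repeated runs.
enum TestConsistency: Equatable {
    case passing                     // succeeded every time
    case failing                     // failed every time: broken, not flaky
    case flaky(failureRate: Double)  // mixed results
}

// `outcomes` holds one entry per run: true = passed, false = failed.
func classify(_ outcomes: [Bool]) -> TestConsistency? {
    guard !outcomes.isEmpty else { return nil }
    let failures = outcomes.filter { !$0 }.count
    switch failures {
    case 0:
        return .passing
    case outcomes.count:
        return .failing
    default:
        return .flaky(failureRate: Double(failures) / Double(outcomes.count))
    }
}

if case .flaky(let rate)? = classify([true, true, false, true]) {
    print(rate) // 0.25
}
```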

Solving flaky tests

It's good to understand that the cause of flaky tests can be different every time. We just went over the most common causes, and each of them should be considered when taking a deep dive into a failing test. Before using any of the techniques I'm about to describe, I recommend cleaning up your local state.

Cleaning up the local environment

As mentioned earlier, flaky tests often occur when running on CI. A test suite on a CI system often runs after a clean build and after the derived data folder has been cleared. It's worth rerunning the failing test locally after cleaning your build folder and derived data to rule these out as the cause of the failure. For example, the device you're running the tests on could be configured with an unexpected global state.

Start by cleaning your local state as follows:

- Xcode: Product → Clean Build Folder to clean your local build folder
- Simulator: Device → Erase All Content and Settings to reset the state of the Simulator

Rerun your test after doing so. If the test is still flaky, check whether deleting the derived data folder has any effect. You can find its location under Preferences → Locations.

Reproducing a flaky test locally using Test Repetitions

Xcode 13 introduced the option to run tests multiple times using Test Repetitions. This can be a great way to catch inconsistent tests. However, it's important to understand that this technique won't catch tests that fail because a changed global configuration no longer matches their expectations. I'll explain later how you can solve these cases.

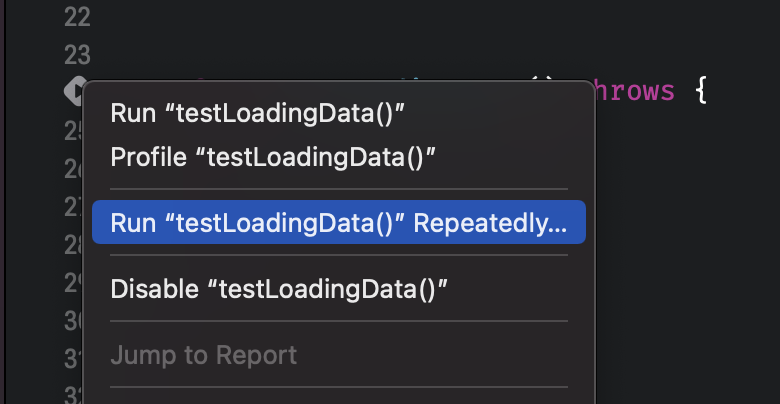

Test Repetitions can be configured by right-clicking the test run button in Xcode and selecting Run <testname> Repeatedly...:

Doing so will open a configuration window to configure how you want to run your tests repeatedly. There are currently three options:

- Stop after failure: The test runs repeatedly until it fails. You can use this as a basic approach to see whether you can reproduce the inconsistency locally and stop at the failure so you can use the debugger.
- Stop after success: The test runs until it succeeds. This can be useful for tests that fail initially but eventually succeed.
- Maximum Repetitions: Use this mode to get a fixed number of runs, which gives you valuable insight into the consistency of the test.

In all modes, the maximum number of repetitions acts as a limit to prevent tests from running indefinitely.
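To make the stopping rules explicit, here's a hypothetical model of the three modes in plain Swift. It is not Xcode's API, only an illustration of when each mode stops and how the maximum repetitions cap applies to all of them.

```swift
// Hypothetical model of the repetition modes; Xcode implements these itself.
enum RepetitionMode {
    case untilFailure  // "Stop after failure"
    case untilSuccess  // "Stop after success"
    case fixedCount    // "Maximum Repetitions"
}

// Runs `test` repeatedly and returns the outcomes (true = passed).
// `maxRepetitions` caps every mode so a run can't go on forever.
func runRepeatedly(mode: RepetitionMode, maxRepetitions: Int, test: () -> Bool) -> [Bool] {
    var outcomes: [Bool] = []
    while outcomes.count < maxRepetitions {
        let passed = test()
        outcomes.append(passed)
        switch mode {
        case .untilFailure where !passed:
            return outcomes
        case .untilSuccess where passed:
            return outcomes
        default:
            continue
        }
    }
    return outcomes
}

// Example: a simulated flaky test that fails on every third run.
var run = 0
let outcomes = runRepeatedly(mode: .untilFailure, maxRepetitions: 100) {
    run += 1
    return run % 3 != 0
}
print(outcomes) // [true, true, false]
```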

The Pause on Failure option will make the debugger stop once an assertion fails. This is extremely useful as it will enable you to use the debugger and dig into the root cause of the failing test.

Getting sample data from CI

If you’re unable to reproduce the failing test locally, getting extra sample data from your CI runs can be useful. You can do this by passing in the following parameters to xcodebuild:

-test-iterations 10 -retry-tests-on-failure

This will retry your failing tests up to 10 times and show you whether they fail consistently or only occasionally. Note that I limited it to a maximum of 10 iterations because CI execution time is valuable. Also, 10 iterations are often enough to get extra sample data and insights into the cause of the failure.

Ensuring consistent global state and configurations

If you're unable to reproduce the flaky test locally, it might be caused by a different system configuration or by global state that changed unexpectedly. There's no single answer to the problem in those cases, but I can at least give you advice on preventing them. This advice will also help you narrow down where the test failure originates.

If you've followed my article on Unit tests best practices in Xcode and Swift, you might already be doing this correctly. Of course, it's important to configure the global state for your unit tests in the setUp methods, but it's even more important to restore the state to its default inside the tearDown methods. Doing so ensures that your tests always run in the same state, making them more likely to produce consistent results.
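A minimal sketch of that pattern, assuming a hypothetical AppSettings singleton: snapshot the global values in setUp, change what the test needs, and restore the snapshot in tearDown. The plain ThumbnailTestsSketch class only mirrors the XCTestCase lifecycle so the example runs without XCTest; in a real test case you'd override setUp() and tearDown() instead.

```swift
// Hypothetical mutable global configuration.
final class AppSettings {
    static let shared = AppSettings()
    var thumbnailSide = 200
    var isAnalyticsEnabled = true
}

// Captures the settings a test may change so they can be put back afterwards.
struct AppSettingsSnapshot {
    let thumbnailSide: Int
    let isAnalyticsEnabled: Bool

    init(of settings: AppSettings) {
        thumbnailSide = settings.thumbnailSide
        isAnalyticsEnabled = settings.isAnalyticsEnabled
    }

    func restore(to settings: AppSettings) {
        settings.thumbnailSide = thumbnailSide
        settings.isAnalyticsEnabled = isAnalyticsEnabled
    }
}

// Mirrors the XCTestCase lifecycle: snapshot in setUp(), restore in tearDown().
final class ThumbnailTestsSketch {
    private var snapshot: AppSettingsSnapshot?

    func setUp() {
        snapshot = AppSettingsSnapshot(of: .shared)
        AppSettings.shared.thumbnailSide = 100 // configuration this test needs
    }

    func tearDown() {
        snapshot?.restore(to: .shared)
        snapshot = nil
    }
}

let tests = ThumbnailTestsSketch()
tests.setUp()
print(AppSettings.shared.thumbnailSide) // 100
tests.tearDown()
print(AppSettings.shared.thumbnailSide) // 200
```

Because the snapshot captures whatever the values were before the test, this also works when another test intentionally changed a default for its own duration.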

If your test executes asynchronous jobs, make sure to either wait for them to finish or cancel them inside the tearDown methods.
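Here's a sketch of the waiting option, assuming a hypothetical ImageCache that schedules work on a serial DispatchQueue. A DispatchGroup tracks pending jobs, so a tearDown can wait for them with a timeout instead of letting them leak into the next test. With Swift Concurrency, keeping a reference to the Task and calling cancel() on it would be the cancellation counterpart.

```swift
import Dispatch
import Foundation

// Hypothetical component that performs work asynchronously on a background queue.
final class ImageCache {
    private let queue = DispatchQueue(label: "ImageCache")
    private let pendingWork = DispatchGroup()
    private var keys: [String] = []

    func storeAsync(_ key: String) {
        // Associating the job with the group lets callers wait for it later.
        queue.async(group: pendingWork) {
            self.keys.append(key)
        }
    }

    // Blocks until all scheduled jobs finished, or returns false on timeout.
    func waitForPendingWork(timeout: TimeInterval) -> Bool {
        pendingWork.wait(timeout: .now() + timeout) == .success
    }

    // Reads through the same serial queue to avoid a data race.
    var storedKeys: [String] {
        queue.sync { keys }
    }
}

let cache = ImageCache()
cache.storeAsync("avatar")
// What a tearDown() could do: wait for pending jobs instead of leaving them
// running while the next test starts.
let finished = cache.waitForPendingWork(timeout: 1)
print(finished, cache.storedKeys) // true ["avatar"]
```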

Verifying failure is not caused by race conditions

Lastly, it’s good to ensure you’re not dealing with data races. Race conditions can cause unexpected and inconsistent behavior and can be found using the Thread Sanitizer. This technique is explained in detail in my article Thread Sanitizer explained: Data Races in Swift.

Conclusion

Inconsistent test results can be frustrating and time-consuming. By using the right techniques and best practices, you'll be better at preventing flaky tests while also being able to solve them faster.

If you'd like to learn more tips on debugging, check out the debugging category page. Feel free to contact me or tweet to me on Twitter if you have any additional tips or feedback.

Thanks!